Democracy’s Last Stand

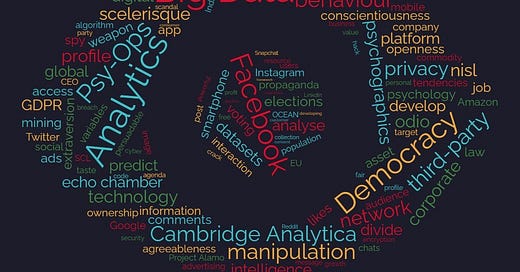

In the age of big data analytics and psychographic manipulation, democracy itself is at stake

Disclaimer: This is a private blog. Any views or opinions represented in this blog are personal and belong solely to the author. All readers are encouraged to critically analyse the opinions, arguments and facts put forward in the article in order to form an independent opinion.

We live in a world where over 2.65 billion people are plugged into the virtual reality of social media, constantly active, sometimes even more than they are in the real world. It is a world of its own, supposedly built with the intention of connecting us as a species at an international level, and it is constantly growing. We are now more connected than ever before. At the mere click of a button we can send a message to any person on Earth. People now enjoy access to information that was inconceivable just a couple of decades ago. For billions, the digital transformation with which the smartphone is synonymous has brought enormous benefits and has made their lives far easier.

We have become so comfortable with its convenience that we no longer stop to consider the ramifications of reaping the benefits of this seemingly free resource. Have you ever paused to ask yourself, “How is it that I can use all these services without paying any money for them? And if I am using them for free, then how come service providers such as Google, Facebook and Amazon are among the richest companies in the world? Where do they make their money from?”

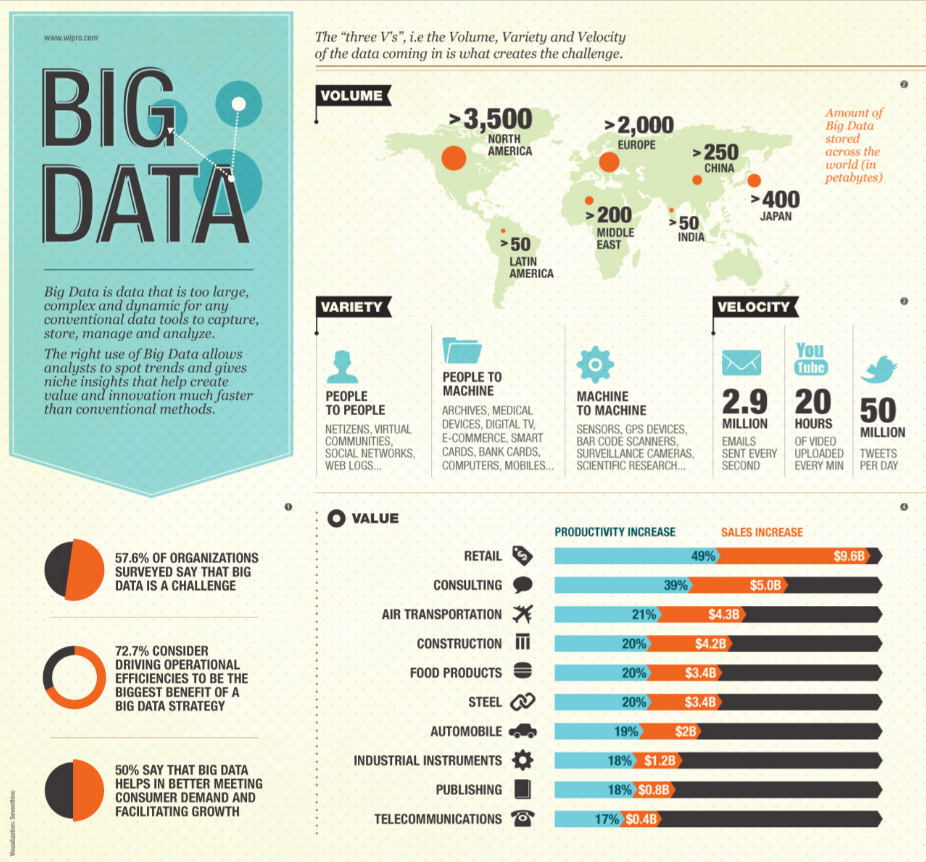

The answer to this question is data. In 2016 the asset value of data actually surpassed that of oil, and data is currently the most valuable economic asset in the world.[1][2] Just as in the 1970s there was heated international debate over the ownership of and rights to natural resources such as oil, a similar debate now surrounds the ownership of data.[3] The companies that provide us with these ‘free services’ actually make money by monetising the data they harvest directly from us. And because data is so valuable, these companies have become extremely rich.

But to understand the significance of this we must first understand what we mean by data and why it is so valuable. Data has enormous potential and can be used both as an indispensable tool and a formidable weapon, as is the case with most new technologies. We have seen how the emergence of new technologies has led to both their constructive use and their weaponization, from the discovery of fire to the harnessing of nuclear power. However, the misuse of data poses a threat more subtle than death. Instead it threatens to bring about something far more terrifying – an existence in fear. Data actually poses a threat to democracy itself.

When you use the internet for everyday things such as watching cute cat videos, chatting with your friends, posting online about your latest vacation, listening to the latest track by Taylor Swift or liking your favourite celebrity’s posts, you leave behind a trail of data, like footprints in sand. However, these footprints don’t simply fade away. The platforms and forums we use collect this data and create a data set for every individual. This data can be about what you eat, what you read, how you dress, move, respond and react.

The data is then fed to specially trained algorithms, which analyse it to create a personality profile that can be used to predict your behaviour, choices, actions and, most importantly, susceptibilities. The personality profile created is known as an OCEAN profile, which stands for Openness, Conscientiousness, Extraversion, Agreeableness and Neuroticism.[4] Each person rates differently on each variable, and the idea is that all the tiny choices you make online, once collected and analysed, become highly invasive yet effective indicators of your tendencies. It is these susceptibilities that are exploited by companies such as Google and Facebook in order to make a profit. They sell information about personal behaviour and tendencies to advertisers and other third-party data analysers, which use it to mould their target audiences’ opinions and behaviour at a psychological level to increase their own profits as well.
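To make the idea of turning small online choices into a personality profile more concrete, here is a minimal sketch in Python. It is only an illustration: the signal names and weights are invented for this example and are not taken from any real profiling system, which would rely on statistical models trained on far more data.

```python
# Hypothetical illustration: the signal names and weights below are invented,
# not taken from any real profiling system.
TRAITS = ("openness", "conscientiousness", "extraversion",
          "agreeableness", "neuroticism")

# Each online signal nudges one or more traits upwards (made-up values).
SIGNAL_WEIGHTS = {
    "liked_travel_page": {"openness": 0.4, "extraversion": 0.2},
    "liked_planner_app": {"conscientiousness": 0.5},
    "posted_party_photos": {"extraversion": 0.5},
    "liked_charity_page": {"agreeableness": 0.4},
    "searched_health_worries": {"neuroticism": 0.5},
}


def ocean_profile(signals):
    """Sum the weights of the observed signals and clamp each trait to [0, 1]."""
    scores = {trait: 0.0 for trait in TRAITS}
    for signal in signals:
        for trait, weight in SIGNAL_WEIGHTS.get(signal, {}).items():
            scores[trait] += weight
    return {trait: min(1.0, max(0.0, score)) for trait, score in scores.items()}


profile = ocean_profile(
    ["liked_travel_page", "posted_party_photos", "liked_planner_app"]
)
print(profile)
```

Even in this toy version, three harmless-looking actions are enough to rank a person higher on extraversion than on any other trait, which is the kind of inference the paragraph above describes.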

The significance of this is huge. We have now come to realise something extremely important – we are no longer the customers of firms such as Google and Facebook. Instead we are now the commodity that they sell to make a profit! We are their product. The end of our privacy isn’t just someone else knowing about everything we do. It is someone else owning our every movement and utterance, and then directing our lives towards their profit.

We are no longer the customers... We are now the commodity!

Even so, some may argue: “So what if my data is being harvested? Isn’t it more convenient for me to receive content that is personalised to my tastes? If my data is being used to make my life easier then there is no problem.” That argument does hold true to some extent. However, at some point it turns sour, and that point is elections. In 2018 Facebook CEO Mark Zuckerberg was brought before the US Congress and faced intense questioning with regard to the misuse of the data of 87 million Facebook users to swing the 2016 United States elections.[5] The firm was also investigated by the British Government because of its involvement with the Brexit campaign of the same year. The investigations lasted for months and resulted in a fine of only £500,000 to be paid by Facebook, not to mention international defamation and a media firestorm. Facebook’s market value plunged by over $50 billion and has since fallen by over twice that amount.

However, Facebook was not the worst hit by this scandal. The company primarily blamed for it was Cambridge Analytica. Cambridge Analytica was (in the words of its ex-CEO) a “behaviour change agency” formalised in 2013.[6] The firm was started by a former military contractor known as the SCL Group. Prior to the formation of Cambridge Analytica, the SCL Group specialised in a field known as Psy-Ops (Psychological Operations), a method of communication warfare. It involved persuading hostile audiences in conflict zones that your enemies are also their enemies. For example, they would collect data on, say, Muslim boys and young men aged 14 to 30 in Afghanistan and research the best possible way to influence them not to join the Taliban or Al-Qaeda. These influencing tactics were refined until the group could predict an audience's response to a given message and home in on the most effective way to convey it. By the time Cambridge Analytica was founded, they already had the power to actively change the behaviour of a population to adhere to a certain ideal or value they propagated, using only data collected from the population. And most importantly, they could do this without the knowledge of their audiences.

It immediately became apparent that their influencing tactics would be very useful to election campaigns, whose aim is also essentially to change the behaviour of a population so that it votes for a certain candidate. So Cambridge Analytica seized the opportunity and began work for election campaigns across the world. Their methods were an outrageous success. They worked on political campaigns in the following countries[7]:

Argentina, 2015

Malaysia, 2013

Kenya, 2013

Nigeria, 2015

India, 2010

Trinidad and Tobago, 2009

Italy, 2012

Colombia, 2011

Antigua, 2013

St. Kitts and Nevis, 2010

United States of America, 2016

United Kingdom (Brexit), 2016

and many more...

Cambridge Analytica’s work in the Leave.EU campaign and the 2016 Trump campaign (Project Alamo) was particularly successful. They collected Facebook data harvested using special apps designed by a Cambridge University academic, Aleksandr Kogan.[8] The apps allowed Cambridge Analytica to harvest not only the data of the app user but also the data of the user’s circle of friends on Facebook. This meant that only a few thousand people had to be paid a small sum of $2-5 each in order to collect the data of a far larger population. This data was then used to create an OCEAN profile for each person, which was fed into a special algorithm that sorted people on the basis of their predicted vote.
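The reach that friend-level access gives can be illustrated with a small Python sketch. This is a toy model under stated assumptions: the friend graph and every name in it are invented, and the function `harvested_profiles` is a hypothetical helper, not a description of how the actual apps were built.

```python
# Toy friend graph; every name here is invented for illustration.
FRIENDS_OF = {
    "asha": ["ben", "chen", "dev"],
    "ben": ["asha", "emma"],
    "farah": ["dev", "gita", "hari"],
}


def harvested_profiles(app_users, friends_of):
    """Return everyone whose data is exposed: the app users plus their friends."""
    reached = set(app_users)
    for user in app_users:
        reached.update(friends_of.get(user, []))
    return reached


paid_users = ["asha", "farah"]
reached = harvested_profiles(paid_users, FRIENDS_OF)
print(f"{len(paid_users)} paid users exposed {len(reached)} profiles")
```

In this toy graph two paid users expose seven profiles. With real friend lists running into the hundreds, the same multiplication is what lets a small paid group stand in for a very large population.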

After the population’s political inclinations had been identified, only some people were targeted. (This is important to note because it tells us that micro-targeting is not an unbiased system and that it affects people unequally.)[9] The people who were targeted consisted primarily of individuals without any strong political affiliation who could be influenced to vote one way or the other. These people were termed the “Persuadables”. Amongst the Persuadables too, only some were focussed on: those who lived in “swing states”, which had a major impact on the final outcome of the election. This turned out to be an effective strategy because, according to David Carroll, Professor of Data and Advertising at Parsons School of Design, the outcome of the 2016 US election was decided by only 70,000 people across three states. This meant that by bombarding only 70,000 Persuadables in the right states with news, advertisements and articles tailored to change their individual opinions, Cambridge Analytica could bring the candidate they worked for – Donald Trump – to power.
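The two-step narrowing described above, first to the Persuadables and then to those in swing states, can be sketched as a simple filter. Everything specific in this Python example is an assumption made for illustration: the `Voter` fields, the placeholder state names and the 0.4 to 0.6 "persuadable" band are hypothetical, not figures used by Cambridge Analytica.

```python
from dataclasses import dataclass


@dataclass
class Voter:
    name: str
    state: str
    support_probability: float  # predicted chance of backing the client candidate


# Hypothetical values chosen only for this illustration.
SWING_STATES = {"State A", "State B"}
PERSUADABLE_RANGE = (0.4, 0.6)  # neither firmly for nor firmly against


def select_targets(voters):
    """Keep only undecided voters who also live in a swing state."""
    low, high = PERSUADABLE_RANGE
    return [
        v for v in voters
        if v.state in SWING_STATES and low <= v.support_probability <= high
    ]


voters = [
    Voter("voter_1", "State A", 0.52),  # persuadable, swing state
    Voter("voter_2", "State A", 0.91),  # already decided
    Voter("voter_3", "State C", 0.50),  # persuadable, but not a swing state
    Voter("voter_4", "State B", 0.45),  # persuadable, swing state
]
targets = select_targets(voters)
print([v.name for v in targets])
```

Only two of the four voters survive both filters, which shows how a campaign can end up concentrating its entire effort on a small slice of the electorate.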

According to Carole Cadwalladr of the Guardian, who studied Cambridge Analytica for over a year[10]:

“A few dozen “likes” can give a strong prediction of which party a user will vote for, reveal their gender and whether their partner is likely to be a man or woman, provide powerful clues about whether their parents stayed together throughout their childhood and predict their vulnerability to substance abuse. And it can do all this without any need for delving into personal messages, posts, status updates, photos or all the other information Facebook holds.”

Extremely subtle changes would be made to political ads in order to influence people on the basis of their OCEAN profile. For example, if Cambridge Analytica was writing the caption for the ad of a political candidate promising jobs, they would frame the message differently for each individual. If a person rated highly on the conscientiousness scale, they would write something along the lines of “Jobs are an opportunity to succeed”. However, if someone else was high on the neuroticism scale, their sentence would say “Jobs offer financial security”.[11] These subtle changes, which may be as minor as changing the arrangement of words in a sentence or the shade of the background of an image, often go unnoticed by us as users. But in reality they build up and have a profound impact on our subconscious minds. Over time these changes can result in a change in behaviour, opinions and finally voluntary actions such as voting.
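The caption example above amounts to a lookup keyed on a person's strongest trait. The Python sketch below shows that logic under simple assumptions: the two captions are the ones quoted above, while the fallback caption, the profile numbers and the rule of picking the single highest-scoring trait are hypothetical choices made for this illustration.

```python
# Captions for the two traits mentioned in the text above.
CAPTIONS = {
    "conscientiousness": "Jobs are an opportunity to succeed",
    "neuroticism": "Jobs offer financial security",
}
# Hypothetical fallback for traits with no tailored caption.
DEFAULT_CAPTION = "More jobs for everyone"


def pick_caption(profile):
    """Choose the caption matching the highest-scoring trait in the profile."""
    dominant_trait = max(profile, key=profile.get)
    return CAPTIONS.get(dominant_trait, DEFAULT_CAPTION)


# Two invented profiles that differ mainly in their strongest trait.
careful_planner = {"openness": 0.3, "conscientiousness": 0.9, "neuroticism": 0.2}
anxious_reader = {"openness": 0.3, "conscientiousness": 0.4, "neuroticism": 0.8}

print(pick_caption(careful_planner))
print(pick_caption(anxious_reader))
```

The same promise of jobs reaches the two people in different words, and neither of them sees the version shown to the other.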

Cambridge Analytica’s operations finally came to an end after the Facebook ‘data breach’ scandal came to light. They filed for bankruptcy and shut down permanently in 2018. The UK parliament published a report in 2019 declaring Facebook and other social media giants “digital gangsters”. The report also claimed that Britain’s election laws were no longer satisfactory for conducting a free and fair election. The fact is that the types of technologies used by Cambridge Analytica are still out in the public domain and continue to be used in democratic political campaigns to play with the psychology of entire populations without their knowledge or consent. Even as recently as the 2019 general elections here in India, almost every major political party used big data and psychographics as tools of propaganda to fulfil their political agendas.[12] Under such circumstances we must ask ourselves, “Is it still possible to conduct a free and fair election? Or are elections simply going to be won by the party that hires the best data team that money can buy?”

In our neighbouring country Myanmar, Facebook was used to incite racial hatred and violence against the Rohingyas, which ultimately led to a genocide. The current Brazilian right-wing extremist president Jair Bolsonaro was elected due to the dissemination of fake news on WhatsApp.[13] Even down under in Australia, where bushfires laid waste to large parts of the country earlier this year, disinformation and lies spread across social media made the job of environmentalists even harder in an already grave situation.[14]

Technology by itself is politically neutral. However, it has quickly been politicised globally. Authoritarian states have learnt how to use surveillance technologies, AI and mass data to their advantage in order to gain domestic control and to erode democratic societies abroad.[15] For democracies whose cohesion is based on the sovereignty and consent of their citizens, this represents an unprecedented challenge. How democracies approach this challenge will likely be a key factor in their performance, given intensifying competition among political systems. If democracies give in to the advent of such technologies, it could mean the death of democracy itself.

According to the Guardian, by 2025 most of us may have over 70,000 data points defining each of us digitally, and currently here in India we have no real right to or control over how this data is used and by whom. To put that into perspective, Cambridge Analytica only used about 5,000 data points per person to swing the 2016 US elections.

In India, there have been attempts to pass a data protection law, and in December 2019 we received our first such bill. However, it was a complete farce. According to B.N. Srikrishna, a retired Supreme Court Judge, the bill drafted by the Indian Data Protection Authority left out all the safeguards suggested by the panel, and data can continue to be accessed without citizens’ consent, which paradoxically is what the bill was actually meant to prevent.[16] Data protection and data rights are worth fighting for as responsible citizens of the global cyber network, and it is up to us to put pressure on administrations to pass legislation that secures them. This is democracy’s last stand, and only we can decide the outcome of this battle.

Sources:

[1] https://www.economist.com/leaders/2017/05/06/the-worlds-most-val

[2] [6] [7] [9] [13] https://www.netflix.com/in/title/80117542

[3] https://www.weforum.org/agenda/2019/10/3-reasons-why-data-is-no

[4] [8] [11] https://www.theguardian.com/news/2018/may/06/cambridge-analyti

[5] https://www.theguardian.com/uk-news/2019/mar/17/cambridge-analytica-year-on-lesson-in-institutional-failure-christopher-wylie

[10] https://www.theguardian.com/technology/2018/mar/17/facebook-ca

[12] https://economictimes.indiatimes.com/news/politics-and-nation/cambridge-analytica-whistle-blower-reveals-india-operation-details-read

[14] https://www.theguardian.com/australia-news/2020/jan/12/disinformation-and-lies-are-spreading-faster-than-australias-bushfires

[15] https://www.dbresearch.com/PROD/RPS_EN-PROD/PROD0000000000497768/Digital_politics%3A_AI%2C_big_data_and_the_future

[16] https://www.thehindu.com/news/national/data-protection-bill-not-in-line-with-draft/article30307560.ece

©️Ayaan Dutt, 2020